This means that we can easily improve our app as we learn from what users actually say to the app. Quickly after we published the app, we realized users had many ways to say they didn’t know the sentence - “I have no idea,” “I forgot,” “what is it,” and even the good old, “idk.”Īdding these alternatives into the app was easy, since Dialogflow allows us to quickly add new variations as we go.

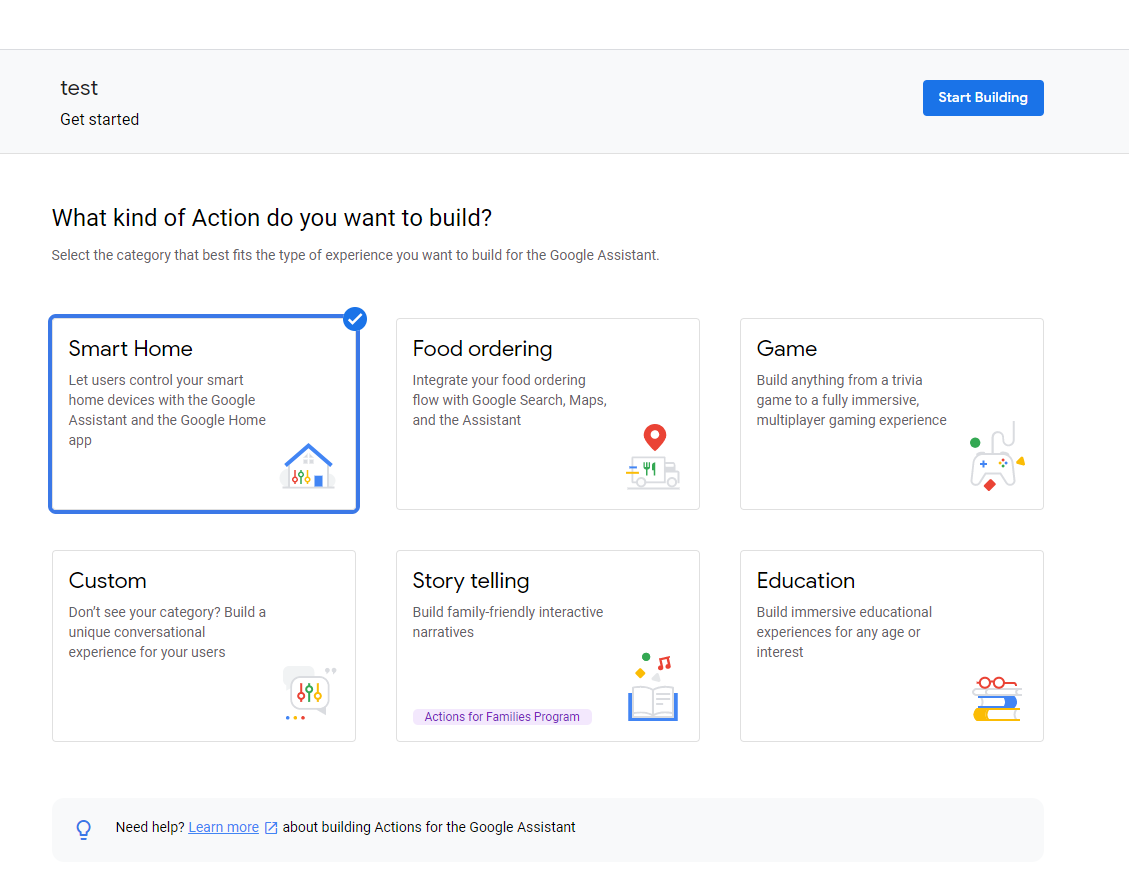

For instance, if a user didn’t know how to translate the sentence they were given, they could say, “I don’t know” to get the answer and skip to the next one. asking the user a yes or no question, and understanding the user response), and it would also let us prototype quickly.ĭialogflow’s built-in capabilities proved very useful to us. We decided to go with Dialogflow, as it could handle some of the flows for us (e.g. So we had to choose between Dialogflow and Actions SDK.ĭialogflow gives you a nice user interface for building conversation flows (in some cases you can even get away without writing any code), and also incorporates some AI to help you figure out the user intent.Īctions SDK gives you “bare-bone” access to user input, and it is up to you to provide a backend which will parse that input and generate the appropriate responses. In our case, however, we needed more power: we wanted to be able to keep track of the user’s progress, and actually mixing Spanish and English in a single app is quite a challenge, as you will see in a minute. This can be very useful for school teachers, who can easily create games for their students. When you use a template, you don’t need to write a single line of code, just fill-in some spreadsheet and the action is created for you, based on the information that you fill. The ready-made templates are great for use cases such as creating a Trivia Game or Flash Cards app. There are currently three approaches to building Assistant actions: ready-made templates, Dialogflow, and Actions SDK. We are going to cover the tech decisions that we made, and share our experience with the outcomes of our choices. However, the focus of this post is the technical part of creating an Assistant Action - the challenges that we had, the stack we chose, basically sharing with you how the architecture of a complete solution for providing a real-life, complex, Action on Google Assistant. 
We learned a lot throughout this process, and we will probably publish another post from the product / UX point of view in a few weeks. We sat together and designed a persona, which was the basis for creating the texts and possible dialog flows. Daniel was very excited about the idea, and we started collaborating. The next day, I pinged Daniel, and asked him if he was ready to embark on a new adventure. So when the Assistant responded to Ariella that it can’t teach her Spanish “ yet,” it struck me: why don’t I make that happen?! He had some very good points - people are lazy and would rather talk than type, and since it’s still the early days of the platform, there are many opportunities to have a big impact in the technology space. At some point, I heard her ask the device: “Hey Google, Teach me Spanish”, to which the device responded: “I’m sorry, I don’t know how to help with that yet.”Ĭoincidentally, earlier that same day, I read Daniel Gwerzman’s article in which he explains why now is a good time to build actions on Google.

She was very excited about it and tried all sort of things. Some months ago, my life partner Ariella got a new Google Home device. The Spanish Lesson Logo :) So how did this whole thing get started?
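To make the “I don’t know” variations mentioned in this post concrete, here is a minimal sketch of what recognizing them by hand might look like, roughly the kind of parsing you would own yourself with a bare-bones backend. It is not Dialogflow’s API and not the code behind the action: the names `DONT_KNOW_PHRASES`, `normalize`, and `is_dont_know` are hypothetical, and exact matching on normalized text is a deliberately naive stand-in for Dialogflow’s intent matching.

```python
import re

# Hypothetical phrase list, seeded with the examples quoted in this post.
DONT_KNOW_PHRASES = frozenset({
    "i don't know",
    "i have no idea",
    "i forgot",
    "what is it",
    "idk",
})


def normalize(utterance: str) -> str:
    """Lowercase, straighten curly apostrophes, and keep only ASCII letters,
    apostrophes, and single spaces."""
    text = utterance.lower().replace("\u2019", "'")
    text = re.sub(r"[^a-z' ]+", " ", text)
    return " ".join(text.split())


def is_dont_know(utterance: str, phrases: frozenset = DONT_KNOW_PHRASES) -> bool:
    """Return True when the normalized utterance exactly matches a known phrase."""
    return normalize(utterance) in phrases


# Learning a new variation from real users is a one-line data change.
extended_phrases = DONT_KNOW_PHRASES | {"no clue"}
```

With this sketch, `is_dont_know("IDK")` matches, while `is_dont_know("no clue")` only matches once the extended set is passed in. Exact matching like this misses anything you have not listed, which is one reason to let Dialogflow handle the matching instead.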
